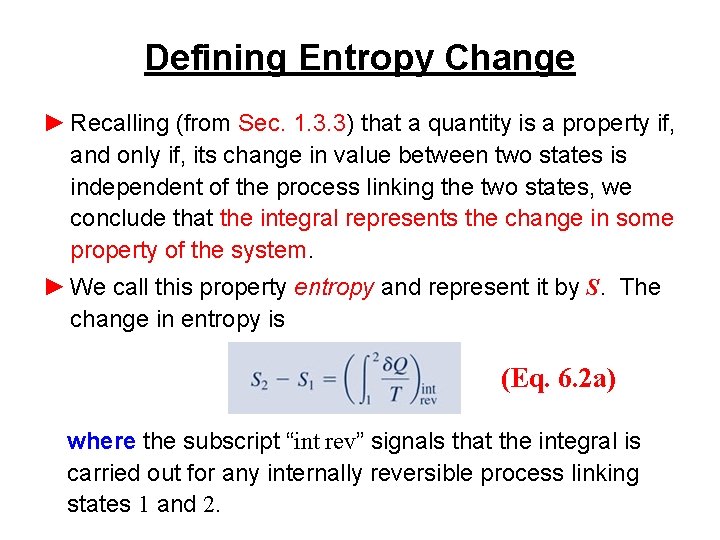

The second law of thermodynamics can be given a rigorous mathematical formulation if we introduce a specific function of state, entropy. For a thermodynamic system undergoing a cyclic process that is quasistatic (infinitesimally slow), in which the system gradually acquires small amounts of heat δQ at corresponding absolute temperatures T, the integral of the “reduced” amount of heat δQ/T throughout the cycle is equal to zero: ∮δQ/T = 0 (the Clausius equality). Clausius derived the equation, which is equivalent to the second law of thermodynamics for equilibrium processes, by considering an arbitrary cyclic process as the sum of a very large (approaching infinity as a limit) number of elementary reversible Carnot cycles (see CARNOT CYCLE).
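As a rough numerical check of the Clausius equality, the sketch below sums Q/T over one reversible Carnot cycle. It assumes an ideal monatomic gas as the working substance. The function name, the temperatures, and the volumes are hypothetical values chosen only for illustration.

```python
import math

R = 8.314462618  # molar gas constant, J/(mol*K)

def carnot_reduced_heat_sum(t_hot, t_cold, v1, v2, n_moles=1.0, gamma=5.0 / 3.0):
    """Return (q_hot, q_cold, sum of Q/T) for one reversible ideal-gas Carnot cycle.

    Assumption: ideal gas with constant heat-capacity ratio gamma (5/3 = monatomic).
    """
    # Adiabats obey T * V**(gamma - 1) = const, which fixes the other two volumes.
    ratio = (t_hot / t_cold) ** (1.0 / (gamma - 1.0))
    v3 = v2 * ratio  # volume after the adiabatic expansion
    v4 = v1 * ratio  # volume before the adiabatic compression
    q_hot = n_moles * R * t_hot * math.log(v2 / v1)    # heat absorbed at t_hot
    q_cold = n_moles * R * t_cold * math.log(v4 / v3)  # negative: heat rejected at t_cold
    # The adiabatic legs exchange no heat, so they contribute nothing to the sum.
    return q_hot, q_cold, q_hot / t_hot + q_cold / t_cold

# Hypothetical cycle: 500 K and 300 K reservoirs, isothermal expansion from 1 L to 2 L.
q_hot, q_cold, total = carnot_reduced_heat_sum(500.0, 300.0, 1.0e-3, 2.0e-3)
print(q_hot, q_cold, total)
```

The two isothermal contributions cancel, so the sum vanishes up to floating-point rounding. This is the single-cycle case from which the integral form is built.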

Clausius (1865), who showed that the process of the conversion of heat to work follows a general physical principle, the second law of thermodynamics ( see THERMODYNAMICS, SECOND LAW OF).

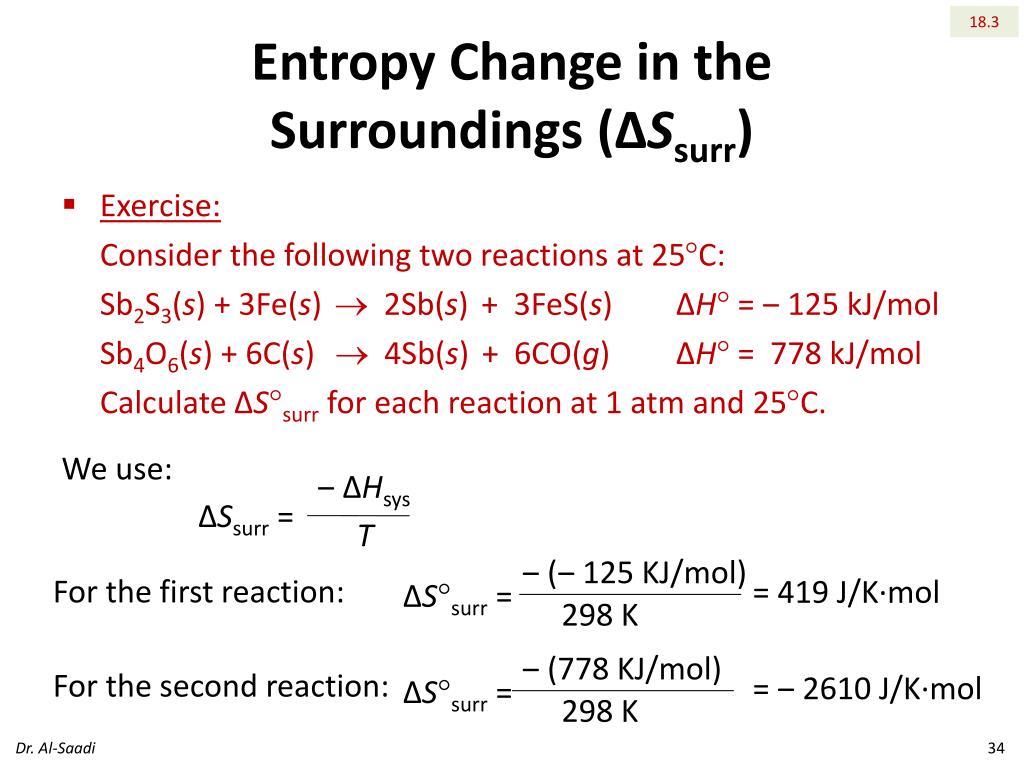

The concept of entropy was introduced in thermodynamics by R. For example, all the most important principles of statistical mechanics can be deduced on the basis of the conceptions of entropy in information theory. These interpretations of entropy have a profound intrinsic relationship. Entropy is also extensively used in other branches of science: in statistical mechanics as a measure of the probability of the realization of some macroscopic state and in information theory as a measure of the uncertainty of some experiment or test, which may have different outcomes. See Conservation of energy, Thermodynamic processesĪ concept first introduced in thermodynamics to define the measure of the irreversible dissipation of energy ( see THERMODYNAMICS). The entropy has been created from the work input, and this process could be continued indefinitely, creating more and more entropy. Since everything but the water is unchanged, this equation also represents the total entropy increase. The entropy increase of the water at its temperature T is Δ S = Q/ T = W/ T. Provided the process was carried out slowly, the temperature difference between the blocks and the water would be small, and heat transfer could be considered a reversible process. Work ( W) had been converted into heat ( Q) with 100% efficiency. When the experiment was completed, the apparatus remained unchanged except for a slight increase in the water temperature. By this and similar experiments, he established numerical relationships between heat and work. Joule caused work to be expended by rubbing metal blocks together in a large mass of water. In his study of the first law of thermodynamics, J. In information theory the term entropy is used to represent the sum of the predicted values of the data in a message. However, such an occurrence is so improbable as to be impossible from a practical point of view.
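The entropy increase in Joule's experiment, ΔS = W/T, can be sketched numerically. The example compares the approximation W/T with the integral of δQ/T over the small temperature rise, assuming a constant specific heat for water. The work input, temperature, and water mass are hypothetical values, not figures from Joule's experiments.

```python
import math

C_WATER = 4186.0  # specific heat of liquid water, J/(kg*K), assumed constant

def entropy_increase_approx(work_j, temp_k):
    """Delta S = Q / T = W / T, valid when the water temperature barely changes."""
    return work_j / temp_k

def entropy_increase_exact(work_j, temp_k, mass_kg):
    """Integrate dQ / T = m * c * dT / T from T to T + W / (m * c)."""
    final_temp = temp_k + work_j / (mass_kg * C_WATER)
    return mass_kg * C_WATER * math.log(final_temp / temp_k)

# Hypothetical values: 1000 J of work dissipated in 10 kg of water at 293.15 K.
work, temp, mass = 1000.0, 293.15, 10.0
approx = entropy_increase_approx(work, temp)
exact = entropy_increase_exact(work, temp, mass)
print(f"W/T = {approx:.4f} J/K, m c ln(T2/T1) = {exact:.4f} J/K")
```

With a large mass of water the temperature rise is tiny, so the two results nearly coincide. That is why the text can treat T as fixed.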

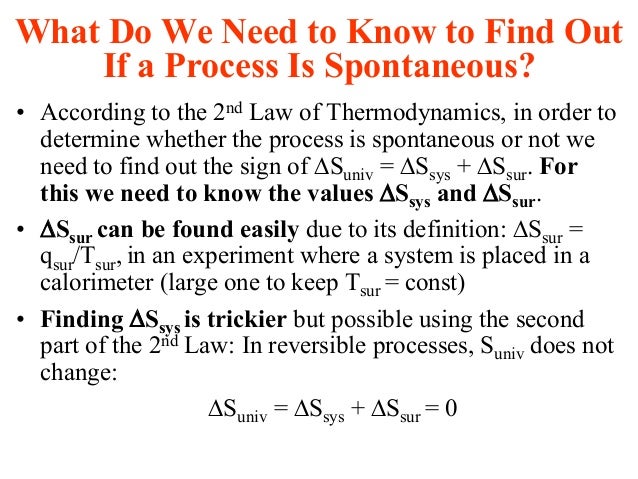

Entropy (ĕnˈtrəpē), quantity specifying the amount of disorder or randomness in a system bearing energy or information. Originally defined in thermodynamics in terms of heat and temperature, entropy indicates the degree to which a given quantity of thermal energy is available for doing useful work: the greater the entropy, the less available the energy. For example, consider a system composed of a hot body and a cold body; this system is ordered because the faster, more energetic molecules of the hot body are separated from the less energetic molecules of the cold body. If the bodies are placed in contact, heat will flow from the hot body to the cold one. This heat flow can be utilized by a heat engine (a device that turns thermal energy into mechanical energy, or work), but once the two bodies have reached the same temperature, no more work can be done. Furthermore, the combined lukewarm bodies cannot unmix themselves into hot and cold parts in order to repeat the process. Although no energy has been lost by the heat transfer, the energy can no longer be used to do work. Thus the entropy of the system has increased.

According to the second law of thermodynamics, during any process the change in entropy of a system and its surroundings is either zero or positive. In other words, the entropy of the universe as a whole tends toward a maximum. This means that although energy cannot vanish because of the law of conservation of energy (see conservation laws), it tends to be degraded from useful forms to useless ones. It should be noted that the second law of thermodynamics is statistical rather than exact; thus there is nothing to prevent the faster molecules from separating from the slow ones.
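The hot-body and cold-body example can be made quantitative with a minimal sketch. It assumes two identical bodies with the same constant heat capacity, which the text does not specify. The function names and the numbers are hypothetical. The first function lets heat flow directly between the bodies. The second runs an idealized reversible engine between them until their temperatures meet.

```python
import math

def direct_contact(t_hot, t_cold, heat_capacity):
    """Bodies equalize by heat flow alone; no work is extracted."""
    t_final = 0.5 * (t_hot + t_cold)  # energy conservation with equal heat capacities
    delta_s = heat_capacity * (math.log(t_final / t_hot) + math.log(t_final / t_cold))
    return t_final, delta_s

def reversible_engine(t_hot, t_cold, heat_capacity):
    """A reversible engine runs between the bodies; total entropy stays constant."""
    t_final = math.sqrt(t_hot * t_cold)  # follows from requiring zero entropy change
    work = heat_capacity * (t_hot + t_cold - 2.0 * t_final)  # energy removed as work
    return t_final, work

# Hypothetical values: bodies at 400 K and 300 K, each with heat capacity 1000 J/K.
t_mix, delta_s = direct_contact(400.0, 300.0, 1000.0)
t_rev, work = reversible_engine(400.0, 300.0, 1000.0)
print(f"direct contact: T = {t_mix:.2f} K, entropy change = {delta_s:.2f} J/K")
print(f"reversible engine: T = {t_rev:.2f} K, work = {work:.2f} J")
```

Under these assumptions, direct contact yields a positive entropy change and no work. The reversible path extracts work and leaves the bodies at a lower common temperature, which illustrates how an entropy increase corresponds to energy that is no longer available for work.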
